With Scotland’s Holyrood election just a week away, SES Co-Investigator Professor Christopher Carman asks whether Scottish voters are ready to navigate an information environment that is increasingly polluted — and highlights what the Electoral Commission is doing about it.

Scotland goes to the polls on 7 May 2026. Most voters will be familiar with the usual features of campaign season: leaflets through the door, leader debates on TV and arguments about the NHS. What they may be less prepared for is the new, murkier territory into which our information environment has drifted — one populated by AI-generated fake news, doctored videos, foreign-amplified social media posts, and the creeping possibility that a clip of a politician saying something outrageous may simply never have happened.

Democratic elections depend on voters being able to access accurate information. When that information is systematically polluted, it is not just individual candidates who lose out — it is public confidence in the legitimacy of democracy itself.

It Has Already Arrived in Scotland

It is tempting to think of political disinformation as something that happens elsewhere. But it has already arrived in Scotland, and in forms that should give us pause.

Consider Reform UK’s Facebook advertising campaign against Anas Sarwar during the 2025 Hamilton, Larkhall and Stonehouse by-election. Reform spent an estimated £20,000–£25,000 generating over a million online impressions on a selectively edited video of the Scottish Labour leader, overlaid with text claiming he had said he would “prioritise the Pakistani community.” In the original footage Mr Sarwar said no such thing. Whether this was a case of selective editing — one of the oldest tricks in the political advertising playbook — or genuine disinformation is a question you can answer for yourself. What is not in doubt is that the tools of manipulation have become cheap, accessible, and effective.

Beyond selective editing, the horizon looks more challenging still. In Ireland in 2025, a deepfake video falsely depicted a presidential candidate withdrawing from the race days before polling day. In November 2025, Fox News in the U.S. acknowledged it had reported as fact a story based on an AI-generated deepfake video. These are not isolated incidents.

The March 2026 Rycroft Report, the independent review into foreign financial influence and interference in UK politics, concluded bluntly that “we are, indeed, already experiencing ‘information warfare’” and that “our defences are worryingly weak.” While Rycroft was focused primarily on foreign interference rather than disinformation in general, the two are increasingly intertwined, and with a Scottish Parliament election a week away, it makes for uncomfortable reading.

What Do Scottish Voters Actually Know and Think?

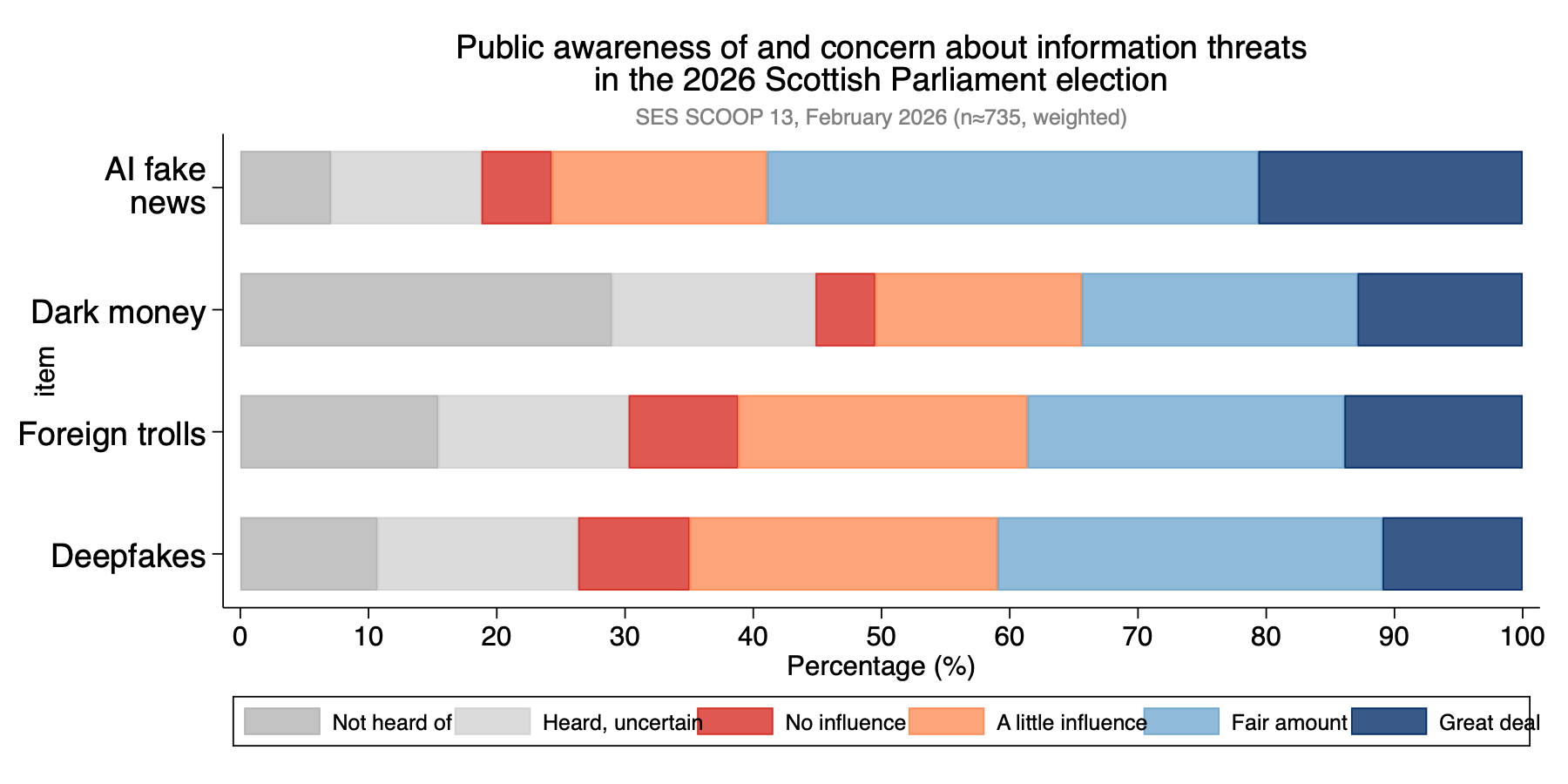

Faced with this shifting landscape, we at the Scottish Election Study wanted to know: how aware are Scottish voters of these threats, and how worried are they? In our February 2026 SCOOP opinion monitor survey, conducted by YouGov with a nationally representative sample of 1,517 Scots, we asked respondents how much influence they thought each of four specific threats would have on the outcome of the May election. Crucially, we also asked whether they had even heard of each threat, allowing us to separate awareness from concern. The accompanying chart tells the story.

AI-generated fake news is both the best-known and most feared: almost all respondents had heard of it, and the majority expected it to have at least a fair amount of influence on the election outcome. Deepfakes show a similar pattern — high awareness, significant concern.

Dark money is the outlier. Roughly 30% of Scottish voters, close to one in three, had never heard of the term. The problem here is not scepticism among those who are informed, but simply that a large chunk of the electorate has not yet encountered the concept at all. You cannot communicate the risks of dark money in political financing to an audience that does not know what it is.

Who Is Most Concerned?

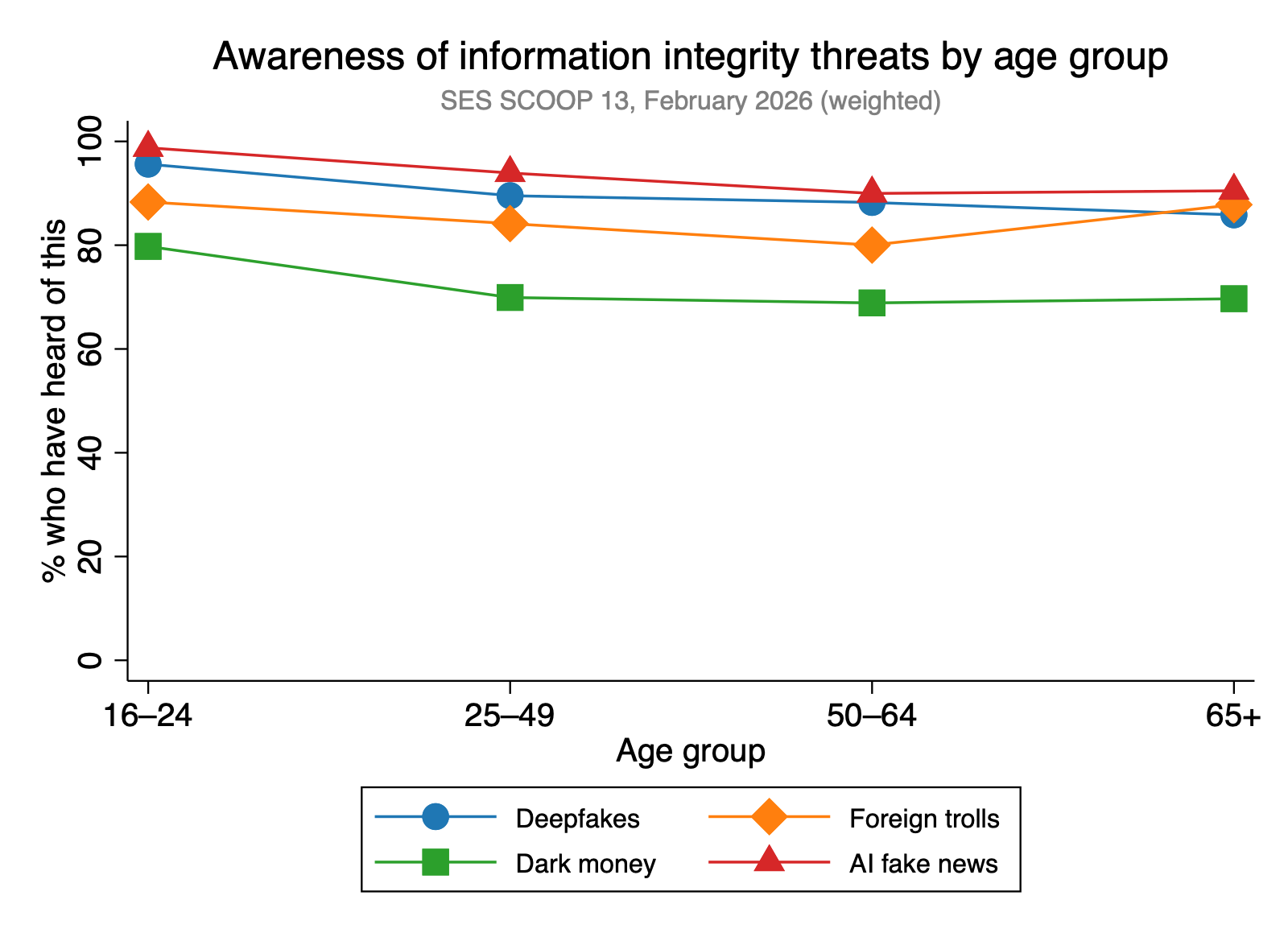

It is tempting to assume that younger, more digitally engaged voters are more aware of AI-driven threats. As the accompanying chart shows, the data do not bear this out.

For AI fake news, deepfakes, and foreign trolls, awareness is high and relatively flat across all age groups. The 16–24 group is only slightly more aware than those aged 65 and over. The more revealing variation is political, not demographic. Constitutional identity appears to function as a filter on which threats feel salient: voters who say they would vote No if an independence referendum were held now are significantly less likely to have heard of deepfakes and AI fake news, even after controlling for age, gender, and social grade.

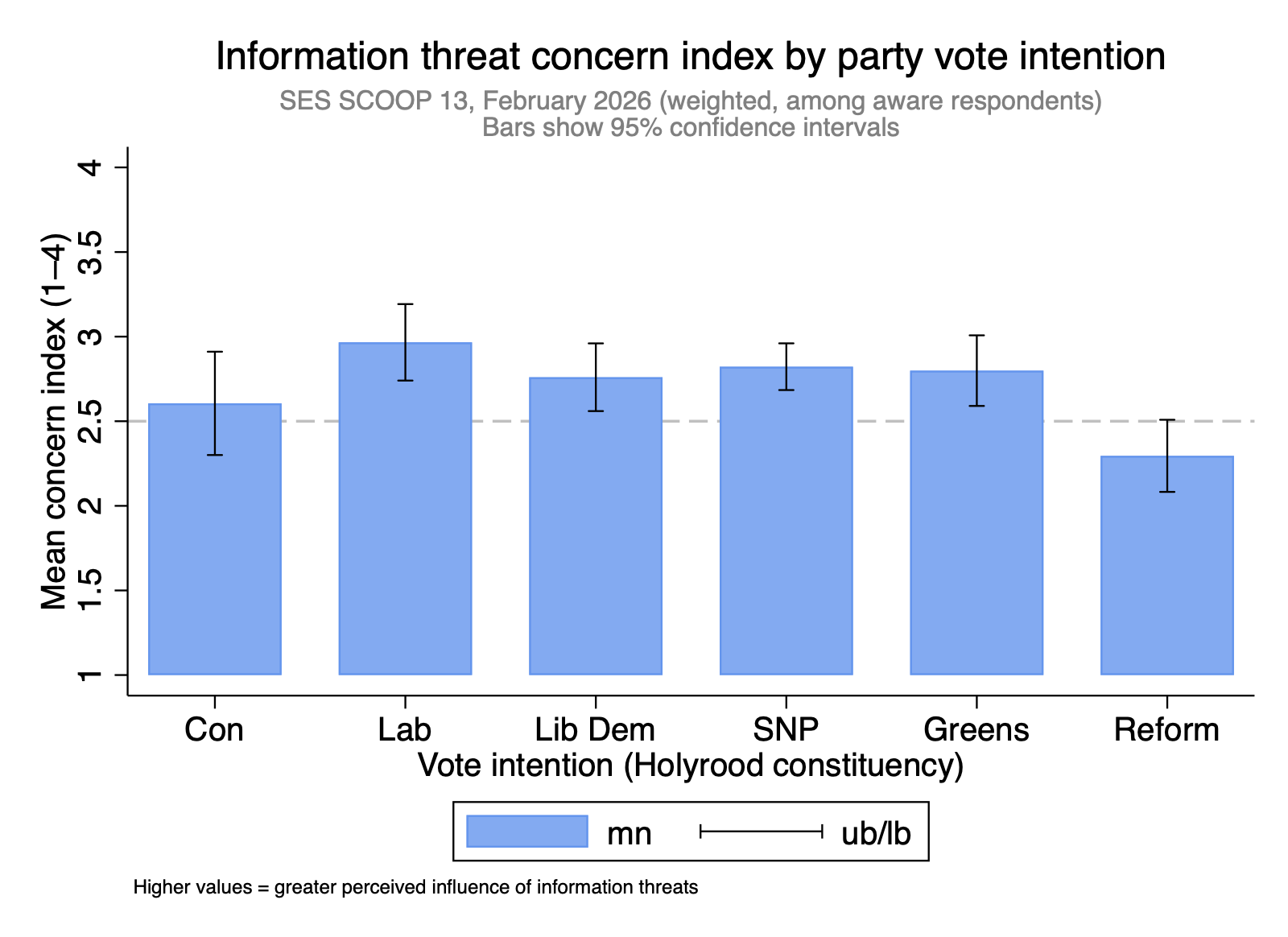

Even more striking is what we find when we look at party vote intention.

Labour voters express the highest concern about information threats; Reform UK Scotland voters the lowest — indeed, they are the only group whose mean concern falls at or below the scale midpoint. This is somewhat counterintuitive: Reform’s public rhetoric frequently invokes themes of elite manipulation and shadowy political forces. Yet Reform voters are distinctively sceptical that the specific mechanisms measured here will shape the 2026 Scottish election. The most plausible interpretation, consistent with a body of research on motivated reasoning in political psychology, is that people tend to worry about information manipulation when they believe it is being used against their own side — and to dismiss it when they do not. (This might explain why, in the wake of Reform’s Anas Sarwar video, Labour supporters express the highest level of concern.)

Information Threats and Democratic Confidence

Perhaps our most significant finding goes beyond awareness and concern. In a statistical model controlling for age, gender, social grade, party vote intention, and constitutional preference, people who are more worried about information integrity threats are significantly less satisfied with how democracy is working in Scotland (β = −0.25, p<0.001).

We cannot establish causality from a single survey — it is possible that general democratic dissatisfaction makes people more sensitive to perceived threats, rather than the reverse. But the correlation holds independently of partisanship and constitutional preference. People who are worried about deepfakes and AI fake news appear to be expressing a concern about the health of the electoral process itself, not just about which party wins. That matters. Tackling the information environment is not only about preventing voters from being misled by a specific false claim — it is about maintaining the basic conditions under which people feel that elections are legitimate.

What the Electoral Commission Is Doing About It

Those responsible for safeguarding our elections are not sitting still. The Electoral Commission has launched an innovative pilot to detect political deepfakes ahead of the May elections in England, Scotland and Wales, delivered in partnership with the Home Office’s Accelerated Capability Environment. The system monitors online content for AI-generated audio and video intended to mislead voters or falsely depict candidates; all flagged content is reviewed by a human analyst before any action is taken. The Commission will share its findings after the elections.

Sam Hartley, Director of Policy, Research and Voter Engagement at the Electoral Commission, set out the rationale at the 7 April Stevenson Lecture at the University of Glasgow: while a deepfake has not yet meaningfully affected a UK election, the Commission is determined to act proactively. As he noted, existing research finds that 59% of young people already find it hard to tell what is true or fake online regarding politics — and during the 2024 UK general election, over half of voters surveyed reported encountering misleading information about parties or candidates.

As Hartley also made clear, the Commission cannot do this alone.

What Can You Do?

At the individual level, treat surprising or emotionally charged content with scepticism — especially short video clips of candidates saying something that seems out of character, or posts from recently created accounts pushing the same claim at volume. Before sharing anything, check whether it can be verified from multiple independent sources. Fact-checking organisations such as Full Fact do invaluable work flagging viral false claims in real time.

If you think you have spotted a deepfake or piece of electoral disinformation, report it — to the platform directly, or to the Electoral Commission via electoralcommission.org.uk.

The Bottom Line

The information environment Scottish voters are navigating this week is genuinely more hazardous than in any previous Holyrood election — not because disinformation is new, but because the tools for creating and amplifying it have become faster, cheaper, and more convincing than ever before.

Our data suggest that Scottish voters are broadly aware of this, and broadly worried, particularly about AI-generated fake news and deepfakes. Concern about information integrity is, remarkably, one of the few things that crosses the party and constitutional divides that structure almost everything else in Scottish political opinion. The Electoral Commission’s deepfake detection pilot and the wider conversation sparked by the Rycroft Report are steps in the right direction. But the honest answer is that this is a fast-moving problem and our institutions are struggling to catch up.

In the meantime, the best defence any voter has is the oldest journalistic maxim in the book: if in doubt, check it out.

The SES SCOOP 13 data discussed in this post were collected by YouGov, 11–18 February 2026, with a nationally representative weighted sample of 1,517 adults (16+) in Scotland. The information integrity battery was asked of a random half-sample (n≈735). Full data and topline results are available at scottishelections.ac.uk/scoop-monitor/. The author presented these findings at the 7 April 2026 Stevenson Trust Lecture, University of Glasgow, alongside Sam Hartley (Electoral Commission).
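
For readers who want a rough sense of the precision of the half-sample figures, the sketch below computes a conventional 95% margin of error for a proportion. It is an illustration only: it assumes simple random sampling and ignores the effect of survey weighting, so the true uncertainty is likely somewhat larger. The function name `margin_of_error` and the variable names are hypothetical, not part of any SES or YouGov tooling.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion p with sample size n.

    Assumes simple random sampling (no design effect from weighting).
    """
    return z * math.sqrt(p * (1 - p) / n)


# Approximate half-sample size reported for the information integrity battery.
half_sample_n = 735

# Worst case (p = 0.5) gives the widest interval for any percentage.
worst_case = margin_of_error(0.5, half_sample_n)

# The roughly 30% who had never heard of "dark money".
dark_money = margin_of_error(0.30, half_sample_n)

print(f"Worst-case margin: +/- {worst_case * 100:.1f} points")
print(f"Margin at 30%:     +/- {dark_money * 100:.1f} points")
```

On these assumptions, percentages from the half-sample carry a margin of roughly three to four points either way, which is worth bearing in mind when comparing groups that differ by only a few points.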